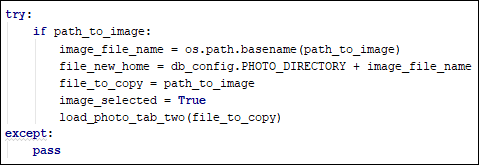

Photo by Sincerely Media on Unsplash

Motivation

When putting your code into production, you will most likely need to deal with organizing the files of your code. It can be really time-consuming to read, create, and run many files of data. This article will show you how to automatically:

- Loop through files in a directory.
- Create nested files if they do not exist.
- Run one file with different inputs using a bash for loop.

These tricks have saved me a lot of time while working on my data science projects. I hope you will find them useful as well!

Loop through Files in a Directory

Suppose we have multiple data files to read and process, like this:

    ├── data
    │   ├── data1.csv
    │   ├── data2.csv
    │   └── data3.csv
    └── main.py

We can try to manually read one file at a time:

    import pandas as pd

    def process_data(df):
        pass

    # Each file is read and processed by hand
    df = pd.read_csv("data/data1.csv")
    process_data(df)
    df2 = pd.read_csv("data/data2.csv")
    process_data(df2)
    df3 = pd.read_csv("data/data3.csv")
    process_data(df3)

This works, but it does not scale once we have more than three files. If the only thing that changes in the script above is the data file, why not use a for loop to access each file instead?

The script below allows us to loop through the files in a specified directory:

    import os

    directory = 'data'
    for filename in os.listdir(directory):
        if filename.endswith(".csv"):
            file_directory = os.path.join(directory, filename)
            print(file_directory)

Output:

    data/data3.csv
    data/data2.csv
    data/data1.csv

Here are the explanations for the script above:

- for filename in os.listdir(directory): loop through the files in a specific directory.
- if filename.endswith(".csv"): access only the files that end with '.csv'.
- file_directory = os.path.join(directory, filename): join the parent directory ('data') and the name of the file within it.

Now we can access all of the files within the 'data' directory!

Create Nested Files if they do not Exist

Sometimes we might want to create nested files to organize our code or models, which makes them easier to find in the future. For example, we might use 'model1' to denote a specific feature engineering approach. Within 'model1', we might want to train several types of machine learning models on our data (for example 'model1/XGBoost'). For each machine learning model, we might even want to save different versions, because the hyperparameters used for the model differ.

Thus, our model directory can look as complicated as below:

    model
    ├── model1
    │   ├── NaiveBayes
    │   └── XGBoost
    │       ├── version_1
    │       └── version_2
    └── model2
        ├── NaiveBayes
        └── XGBoost
            ├── version_1
            └── version_2

In this example, we will set up the logging configuration using the basicConfig() function, so as to log the messages to an external file named mylog.log. As the complete path is not provided, this file will be created in the current working directory. Alternatively, you may provide the complete path to the log file.

    import logging

    # set up logging basic configuration for logging to a file
    logging.basicConfig(filename="mylog.log")

    logging.warning('This is a WARNING message')
    logging.error('This is an ERROR message')
    logging.critical('This is a CRITICAL message')

Output (contents of mylog.log):

    WARNING:root:This is a WARNING message
    ERROR:root:This is an ERROR message
    CRITICAL:root:This is a CRITICAL message

Example 2: Logging Messages to Log File using Handler

    import logging

    logger = logging.getLogger(__name__)
    handler = logging.FileHandler('mylog.log')
    logger.addHandler(handler)  # route this logger's messages to the file

    logger.warning('This is a WARNING message')
    logger.critical('This is a CRITICAL message')

The logging appends messages to the file.

In this tutorial of Python Examples, we learned how to log messages to a file in persistent storage.
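To illustrate the point about providing a complete path to the log file, here is a minimal sketch. It rests on a few assumptions that are not part of the tutorial: the helper name `log_to_file`, the logger name `file_logging_sketch`, and the use of a temporary directory are all hypothetical choices made for illustration. It attaches a `logging.FileHandler` to a dedicated logger rather than calling `basicConfig()`, because `basicConfig()` only configures the root logger if it has no handlers yet, which makes it awkward to demonstrate more than once in the same process.

```python
import logging
import os
import tempfile


def log_to_file(log_path):
    """Write two messages to log_path and return every line now in the file."""
    # Hypothetical logger name, chosen only for this sketch.
    logger = logging.getLogger("file_logging_sketch")
    logger.setLevel(logging.WARNING)
    logger.propagate = False  # keep the messages out of the root logger

    # FileHandler opens the file in append mode ('a') by default.
    handler = logging.FileHandler(log_path)
    handler.setFormatter(logging.Formatter("%(levelname)s:%(name)s:%(message)s"))
    logger.addHandler(handler)
    try:
        logger.warning("This is a WARNING message")
        logger.critical("This is a CRITICAL message")
    finally:
        # Detach and close so the file is flushed and no handler is left behind.
        logger.removeHandler(handler)
        handler.close()

    with open(log_path) as f:
        return f.read().splitlines()


# A complete path: a fresh temporary directory plus the file name.
log_path = os.path.join(tempfile.mkdtemp(), "mylog.log")
log_to_file(log_path)
print(log_to_file(log_path))
```

Calling the helper twice with the same path leaves four lines in the file rather than two, since the second call appends to what the first one wrote.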
